School Psychology Forum: Interpretations of Curriculum-Based Measurement


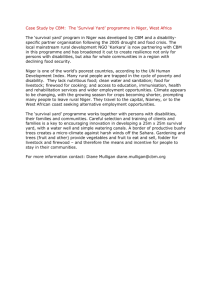

School Psychology Forum: Research in Practice
Volume 1 • Issue 2 • Pages 75–86 • Spring 2007

Interpretations of Curriculum-Based Measurement Outcomes: Standard Error and Confidence Intervals

Theodore J. Christ
Melissa Coolong-Chaffin
University of Minnesota

Dr. Christ: I inserted some comments and highlights throughout. I hope they help. Keep in mind, this was written 8 years ago. Much of it still applies, but we now know more about RTI and the interpretation of data. Just skim. The big ideas matter more than the details.

Abstract: Curriculum-based measurement (CBM) is a set of procedures uniquely suited to inform problem solving and response-to-intervention (RTI) evaluations. The prominence, use, and emphasis on CBM are likely to increase in the coming years because of the recent changes in federal law (No Child Left Behind and the 2004 reauthorization of the Individuals with Disabilities Education Act [IDEA]) along with the advent and growing popularity of RTI models (i.e., multitiered and dual discrepancy). As a result of these changes in legislation, it is likely that CBM data will be used more often to guide high-stakes decisions, such as those related to diagnosis and eligibility determination. School psychologists must remain ever vigilant leaders in schools to guide assessment and evaluation practices. That includes implementation and advocacy for valid uses and interpretations of measurement outcomes. The authors promote a perspective and methodology for school psychologists to reference the consistency, reliability, and sensitivity of CBM outcomes. Specifically, school psychologists should reference standard error and report confidence intervals as they apply to estimates of both level and trend. A conceptual foundation and guidelines are presented to interpret CBM outcomes.
Overview

In a previous article published in School Psychology Forum, Burns and Coolong-Chaffin (2006) described the three-tiered model for service delivery and assessment within a response-to-intervention (RTI) framework. The article explained that the vast majority of students (>80%) should be served by a generalized set of Tier 1 services. A small subset of the student population ( 20%) should be served by the prevention and remediation services that comprise Tier 2. Finally, a very small subset of the student population ( 5%) should be served by the intensive and individualized remediation and support services that comprise Tier 3. According to the article, (a) the primary responsibilities of the school psychologist within an RTI model include the design and selection of both intervention-related and evaluation-related procedures, (b) triannual benchmarking and screening should occur for all students as part of Tier 1 services, (c) monthly assessment and evaluation should occur for students who are targeted for prevention and remediation as part of Tier 2, and (d) in the most critical cases ( 5%), weekly or daily assessments and evaluations should occur for students with intensive and individualized needs.

An obvious question for school-based practitioners is what tools should be used to do this assessment. An examination of existing RTI models in the field reveals that curriculum-based measurement (CBM) procedures are commonly used to monitor student progress and make decisions about services (Fuchs, Fuchs, McMaster, & Al Otaiba, 2003; Fuchs, Mock, Morgan, & Young, 2003). CBM fits well within problem-solving and RTI models of service delivery. CBM procedures were established to assess the development of academic skills in the content areas of reading, mathematics, written expression, and spelling (Deno, 1985, 2003). These procedures might be used to assess either the level or trend of academic achievement.
Level is typically estimated by the median value across multiple CBM administrations. Best practices suggest that three CBM probes should be administered on each of 3 or more days (Shinn, 2002). The median level of performance within each day is derived. The median of those values is used to establish the typical level of performance. The rate of academic development is often estimated with the line of best fit for a set of progress monitoring data. Progress monitoring data are typically collected once or twice per week for a period of 6 or more weeks. The slope, or steepness, of the line of best fit is used to establish the general rate of academic growth. These are the two most likely measurement schedules and interpretations when CBM is used to assess and evaluate the level and rate of academic achievement.

A review of the RTI literature suggests that RTI evaluations will depend substantially on CBM data. Clearly, CBM procedures are useful and uniquely suited for use within RTI. However, there are some issues and potential cautions that should be considered before CBM data are used to guide decisions, especially within the upper tiers of RTI where the stakes increase greatly (e.g., CBM data are used to inform eligibility decisions). The issue of measurement precision should be considered before assessment outcomes are used to guide educational decisions. Although it is often appropriate to use brief and informal assessments to guide low-stakes decisions, there are more rigorous standards for assessments when they are used to guide high-stakes decisions. Low-stakes decisions include routine classroom and screening decisions. Many Tier 1 decisions are relatively low stakes. High-stakes decisions include diagnosis and eligibility decisions. Many Tier 2 decisions and most Tier 3 decisions are relatively high stakes.
The fundamental distinction is that the impact of a low-stakes decision is relatively short term and easily reversible, while the impact of a high-stakes decision is more long term and potentially permanent. When data are used to guide high-stakes decisions, it is necessary to use caution and establish the requisite level of confidence before arriving at a decision. In part, this requires that the consumers of data be aware of the technical qualities of measurements. Those qualities include the reliability of measurement and the validity of their use. Although it is relatively common to hear that CBM is technically adequate or that CBM is reliable and valid, it is necessary to translate the outcomes of psychometric research so these outcomes can be applied at the point of interpretation. The content of this article is limited to the issues of reliability and measurement error and will provide the rationale and methods to reference standard error as part of CBM interpretations. This information is critical for any school-based professional who functions within a problem-solving or RTI framework.

Dr. Christ: INFERENCE is central to data use. Consider what you actually observe and what you infer. We almost always go beyond the data with our interpretation, which is inference.

Sampling and Reporting Achievement Using CBM

Consumers of assessment data are less interested in how a particular student performs at a particular time when presented with a particular set of stimuli. Rather, consumers are interested in performance on multiple administrations within and across days with a variety of stimulus sets. Consumers use assessment outcomes to infer generalized performance across conditions. Curriculum-based approaches to assessment are designed to infer generalized performance within the context of a curriculum or instruction (Hintze, Christ, & Methe, 2006).
It is inferred that student performance on CBM tasks provides useful estimates of generalized performance in the annual curriculum. Multiple measurements are usually necessary to ensure an adequate and representative sample of the stimulus materials and student performances within the annual curriculum. Those outcomes might be presented in either a graphic or numeric format. It is common to plot data graphically when multiple measurements are collected. That format is useful because it facilitates visual analysis of level, trend, and variability (Barlow & Hersen, 1984; Tawney & Gast, 1984). Once graphed, patterns are often self-explanatory and readily apparent without the use of statistics. In contrast, statistical presentations of assessment data are typically limited to only a single numeric estimate of level (i.e., mean or median) or trend (i.e., slope). It seems that there might be a tendency to overlook estimates of variability when data are reported as a single numerical estimate. One purpose of this article, therefore, is to establish that it is limiting and potentially misleading to the consumer of data when estimates of stability, dispersion, or variability are left out. To that end, this article will address issues of standard error as they apply to estimates of both CBM level and growth.

NASP School Psychology Forum: Research in Practice Interpreting CBM Outcomes 76

As discussed, the level and trend of student achievement are often derived with data collected across multiple administrations using either graphic or statistical analysis. There is no expectation that student performances would, or should, be identical across administrations. Variability and dispersion of performance are practically inevitable. Therefore, it follows that any single estimate of performance without reference to precision, variability, and dispersion is incomplete. Student performances across administration conditions will vary.
The variability is, in part, a function of the inconsistencies related to the setting, stimulus materials, administrators, occasions, and student disposition. When making educational decisions using CBM data, it is critical, therefore, to accurately communicate the variability in those data. To fail to do so may lead to improper decisions that have high stakes for the students involved.

Sensitivity, Reliability, and Consistency

The consistency of measurement is often analyzed and discussed as reliability, which is, in many ways, the reciprocal of sensitivity. In other words, if a measure is highly sensitive then it is less likely to yield consistent or reliable results across repeated administrations. The research literature has established that CBM is a highly sensitive measurement procedure. CBM is sensitive to the characteristics of the administrator (Derr-Minneci, 1990; Derr-Minneci & Shapiro, 1992), setting/locations of the assessment (Derr-Minneci, 1990), specification of the directions (Colon & Kranzler, 2006), task novelty (Christ & Schanding, 2007), probe type (Fuchs & Deno, 1992; Hintze & Christ, 2004; Hintze, Christ, & Keller, 2002; Shinn, Gleason, & Tindal, 1989a), assessment duration (Christ, Johnson-Gros, & Hintze, 2005; Hintze et al., 2002), stimulus content (Fuchs & Deno, 1991, 1992; Hintze et al., 2002; Shinn, Gleason, & Tindal, 1989), and stimulus arrangement (Christ, 2006a; Christ & Vining, 2006). Student performance on CBM tasks is likely to be influenced by those and other characteristics of the measurement conditions. That sensitivity limits the utility of any narrow or specific point estimate. Instead, it is necessary to interpret any CBM outcome as one sample of behavior. It is necessary to collect multiple samples before an estimate of typical performance is derived. Moreover, any estimate of typical performance should reference the likely range of performances across repeated measurements.
Variability is practically inevitable and, as such, it should be referenced when CBM outcomes are reported or interpreted to guide educational decisions.

Empirical and Theoretical Dispersion

Suppose CBM-Reading (CBM-R) were used to assess a second-grade student 100 times with no retest effects, as if the student’s memory of each test were immediately erased after each administration. There would be some variability in performance across measurement occasions. Variability is almost inevitable even if the conditions of measurement were well standardized and tightly controlled. That is especially true in the case of CBM, and CBM-like measures, that are highly sensitive to the variability in student performances within and across administrations (as discussed above). The designs of CBM procedures establish their sensitivity. Both the speeded metric, which is often highly sensitive to fluctuations in student performance, and the discrete, relatively small, unit of observation establish that sensitivity. For example, the metric for CBM-R is words read correct per minute (WRCM). That metric isolates a relatively small unit of behavior (i.e., word) that is easily observable and occurs frequently within the measurement duration. Although it might seem somewhat burdensome and unnecessary, oral reading fluency could be reported in lines of text read, sentences read, phrases, or clusters of five word units read. Each of those measurement units might yield more consistent, but less sensitive, measurement outcomes. However, the convention is to use WRCM and, therefore, consumers of CBM-R outcomes should be familiar with the likely variability of student performances across repeated administrations. Figure 1 illustrates the possible distribution of performances for a single student across repeated measurements.
The frequency of observed scores is greatest near the central point in the distribution, and the frequency of observed scores is least near the outer edges. The most likely performance approximates 20 WRCM, which is the arithmetic mean of the frequency distribution. The mean provides both the best estimate of previous performances and the best predictor of future performances. If the data in Figure 1 were used to predict future performance, then the best estimate is probably 20 WRCM and not either 10 WRCM or 30 WRCM.

Figure 1. A frequency distribution of probable outcomes if CBM-R were to be repeatedly administered approximately 100 times. Test scores are reported in words read correct per minute.

[Figure 1: histogram. Horizontal axis: Test Score (10 to 30). Vertical axis: Frequency (0 to 16).]

A brief reference to test theory will provide the context for the interpretation of variable performances.

Dispersion Within Test Theory

Classical test theory (CTT) provides a simple model to conceptualize and evaluate the inconsistency of measurements across occasions. The observed score x is equal to the true score t plus the error e so that x = t + e. That CTT model was the basis for most test development for more than the last 100 years (Crocker & Algina, 1986; Spearman, 1904, 1907, 1913). The model provides the conceptual foundation to interpret each observed score x as the sum of two theoretical components t + e. The true score should not be interpreted as a physical or metaphysical truth that is inherent to the individual. The preferred interpretation of the true score is as the theoretical mean of a large, or infinite, number of administrations. In the case of the data presented in Figure 1, the best approximation of the true score value might be 20 WRCM.
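The model x = t + e can be illustrated with a small simulation. The sketch below is only an illustration under stated assumptions: the true score of 20 WRCM is chosen to resemble Figure 1, the error is assumed to be normally distributed with a standard deviation of 4 WRCM (a hypothetical value), and the function name `simulate_observed_scores` is invented for this example.

```python
import random
from statistics import mean, stdev


def simulate_observed_scores(true_score, error_sd, n, seed=0):
    """Return n observed scores, each equal to true score plus random error (x = t + e)."""
    rng = random.Random(seed)  # fixed seed so the illustration is repeatable
    return [true_score + rng.gauss(0, error_sd) for _ in range(n)]


# Hypothetical values: true score of 20 WRCM, error SD of 4 WRCM, 100 administrations.
scores = simulate_observed_scores(true_score=20, error_sd=4, n=100)

estimated_true_score = mean(scores)  # the mean of many observations estimates t
observed_dispersion = stdev(scores)  # spread of observed scores around that mean

print(f"Estimated true score: {estimated_true_score:.1f} WRCM")
print(f"Dispersion (SD) of observed scores: {observed_dispersion:.1f} WRCM")
```

Any single simulated score may fall well away from 20, while the mean of the 100 scores lands close to it, which is the sense in which the true score is interpreted as the mean of many administrations.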
The true score value is stable and does not fluctuate or change, although our estimates of true score might, so that if 100 more scores were added to the Figure 1 data set the mean and true score estimate might shift up or down. In the proper sense, it is not really possible to observe or establish the true score value because, by definition, observations are contaminated by some degree of error. In that sense, error is simply the fluctuation and variability of observations around the true score. The best estimate of a student’s true score value is the mean of many observed scores. Recall that the consumer of assessment data is most likely interested in a generalized interpretation of the outcome. That is, the consumer seeks the best estimate of likely performance across either actual or theoretical measurement occasions. The consumer is not interested in the particular performance at a particular time and within a particular set of circumstances. Instead, observed score values are most useful, and typically interpreted, as estimates of true score values.

Dr. Christ: A "generalized interpretation" requires INFERENCE. In the case of CBM, the typical educational professional wants to know in general how the child is reading rather than how the child is reading in a particular time, place, using a particular probe, scoring method, and so on.

The previous discussion established that variability in performances across actual and theoretical administrations should be expected. Actual administrations yield observed scores. Theoretical administrations do not yield observed scores, but provide a context to infer the range of likely performances across repeated measurements using psychometric analysis and CTT. In summary, no single measurement datum should be used to describe typical performance or estimate the true score value.
Instead, best practices dictate that there should be reference to both an observed score and the likely variability. Estimates of variability can be derived from the results of many repeated measurements or derived psychometrically (American Educational Research Association, American Psychological Association, & National Council on Measurement in Education, 1999). There are two approaches used to estimate variability. The first is to administer a large number of assessments and derive the standard deviation or range of performances. The second is to administer fewer assessments and use derivations of standard error to estimate the likely magnitude of standard deviation and/or range of performances. Because of the time and resources involved in administering a large number of assessments in order to derive the standard deviation, the use of standard error is perhaps more appealing. If the purpose of measurement is to estimate the likely range of performance and true score values, then it seems more efficient and practical to collect fewer observations and rely on standard error.

Standard Error

Dr. Christ: Focus on the concepts and not the math. The explanation for how standard errors are derived is not very important. The standard error is the amount of variation, or dispersion, of error in measurements.

There are at least two types of standard error that are relevant to the interpretation and use of CBM outcomes within an RTI model. The dual discrepancy model of RTI evaluation establishes that students are eligible for services when their response to low-resourced/typical services is substantially different than their peers (Fuchs, Fuchs, et al., 2003; Fuchs, Mock, et al., 2003). That is, students are eligible for specialized services when the level and trend of their response is (dual) discrepant from that of most other peers.
If CBM outcomes are used to estimate the level and trend of student achievement, then there should be some reference to the standard errors that are relevant to each of these interpretations. The standard error of measurement (SEM) is combined with observed level to estimate the true level of student achievement. The standard error of the slope (SEb) is combined with observed slope to estimate the true slope of student achievement. Each of these types of standard error can then be used to derive a confidence interval. These confidence intervals can then be used in practice so that the consumer of the assessment data understands the variability of the measurement.

Calculate Standard Error of Measurement

SEM is relatively easy to calculate. Only two statistics are required to calculate SEM from local normative data: (a) standard deviation (SD) for performance across the students in the sample or population and (b) an estimate for the reliability of measurement (r). SEM can then be calculated using the formula $\mathrm{SEM} = \mathrm{SD}\sqrt{1 - r}$. It is relatively easy to construct spreadsheets that will automate this calculation (see Christ, Davie, & Berman, 2006). Researchers and practitioners can derive SEM locally or they might depend on generic estimates from the published literature (Christ, 2006b; Christ et al., 2006; Christ & Silberglitt, 2007). Generic estimates of CBM-R reliability coefficients have typically been within the range of .90 to .97 (Howe & Shinn, 2002; Marston, 1989). A review of the research literature might suggest that higher levels of reliability can be assumed when the conditions of administration and instrumentation are carefully controlled (e.g., .95 to .97). Estimates in the lower range might be used when the conditions of administration and instrumentation are not carefully controlled (i.e., .90 to .94). These are just guidelines based on the opinions of the authors.
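The SEM calculation can be sketched in a few lines. This is a minimal sketch that assumes the conventional classical test theory formula, SEM = SD × √(1 − r); the function name `standard_error_of_measurement` is invented for this example. With the SD and reliability values listed in Table 1, it produces values that round to the tabled SEMs.

```python
import math


def standard_error_of_measurement(sd, reliability):
    """Conventional CTT formula: SEM = SD * sqrt(1 - r)."""
    if not 0.0 <= reliability <= 1.0:
        raise ValueError("reliability must be between 0 and 1")
    return sd * math.sqrt(1.0 - reliability)


# SD estimates by grade and reliability values as listed in Table 1.
grade_sd = {"First": 30, "Second": 34, "Third": 39, "Fourth": 39, "Fifth": 41}
reliabilities = (0.90, 0.94, 0.95, 0.97)

for grade, sd in grade_sd.items():
    sems = [round(standard_error_of_measurement(sd, r)) for r in reliabilities]
    print(grade, sd, sems)
```

A district with its own normative SD and a reliability estimate from a technical manual could substitute those two numbers directly.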
Estimates of reliability over a variety of contexts will become available in the literature in the upcoming years (based on the research of the first author), and these values are already reported in some technical manuals that accompany published CBM probe sets (e.g., AIMSweb). Previous research suggests that the standard deviation for within grade performance on CBM-R is likely to be within the range of 35–45 WRCM through the primary grades (Ardoin & Christ, in press; Christ & Silberglitt, 2007). Values in the lower (35–38), middle (39–41) and upper range (42–45) might be applied to the lower (first and second), middle (third), and upper grades (fourth, fifth, sixth), respectively. There will be some variation across populations. That is, districts, schools, and classrooms composed of more diverse populations in terms of academic performance might expect slightly larger estimates. Populations with less diversity might expect slightly smaller estimates. Standard deviations of local student performances within and across grades are easy to compute with standard spreadsheet software.

The generic estimates for reliability and standard deviation can be combined to yield generic estimates of SEM. Recently published research suggests that the likely range of CBM-R SEM values is 6–13 WRCM (Table 1). The lower end of the range coincides with tightly controlled measurement conditions and the upper end of the range coincides with loosely controlled measurement conditions. The most generic estimates for CBM-R administrations under well-controlled conditions might approximate 5–9 WRCM with the lowest estimates for lower primary grades and upper estimates for upper primary grades (Christ & Silberglitt, 2007). The resulting estimates of SEM can be used to guide interpretation and construct confidence intervals.

Table 1. Standard Error of Measurement for Grades by Reliability: CBM-R

| Grade | SD (b) | Low r (a): .90 | Low r (a): .94 | Higher r (a): .95 | Higher r (a): .97 |
|--------|----|----|----|---|---|
| First  | 30 | 9  | 7  | 7 | 5 |
| Second | 34 | 11 | 8  | 8 | 6 |
| Third  | 39 | 12 | 10 | 9 | 7 |
| Fourth | 39 | 12 | 10 | 9 | 7 |
| Fifth  | 41 | 13 | 10 | 9 | 7 |

Note. Standard errors of measurement are reported in words read correct per minute. (a) Estimates of reliability: test–retest reliability estimates reported in the professional literature. (b) SD = estimates of the typical magnitude of standard deviations for CBM-R within grades.

Dr. Christ: The values in Table 1 are useful as rules of thumb.

Dr. Christ: The big idea in the next section is that conceptually, SEM is for level as standard error of slope (SEb) is for slope/trend. We use them in the same way to estimate confidence intervals.

Standard Error of Slope

It is likely that most school psychologists are familiar with the concept of SEM, and, therefore, it should be fairly simple to calculate and apply SEM to interpret CBM estimates of level. The standard error of the slope SEb might be less familiar and slightly more difficult to calculate. For these reasons, a more thorough explanation of SEb will be provided than was provided for SEM. In short, however, SEb is analogous to SEM and can be used in a similar manner to guide the interpretation of CBM estimates of slope or growth (some details are glossed over to allow for a straightforward presentation). A linear, or trend, analysis is often used to estimate growth from CBM-R progress monitoring data. Of the available techniques (i.e., visual analysis, split-middle, regression), research has established that ordinary least squares regression is likely to yield the most accurate and precise estimates of growth (Good & Shinn, 1990; Shinn, Good, & Stein, 1989). The ordinary least squares value for slope (b) describes the rate of change in student performance. It is most common to report growth in weekly units (7 days).
In the early primary grades, students are expected to improve within the range of 1–2 WRCM per week with slightly less growth expected in the later primary grades (Deno, Fuchs, Marston, & Shin, 2001; Fuchs, Fuchs, Hamlett, Walz, & Germann, 1993). Once derived, the observed slope can be compared to the expected slope to evaluate the response to instruction and determine whether students are on pace to meet benchmark goals. Most spreadsheet software can be used to calculate slope using an ordinary least squares regression function. For example, the slope function can be used within Microsoft Excel. The days or dates comprise the x axis data and are listed either across a row or down a column. The corresponding CBM observations comprise the y axis data and are listed in the cells alongside the corresponding dates when each datum was collected. The slope or linear function can then be used to estimate the rate of daily growth and corresponding statistics, which include SEb. The estimate of daily growth and SEb are each multiplied by 7 (for the 7 days per week) to yield weekly rates. For example, if the estimate for daily growth and SEb were .20 and .10 WRCM per day, then the weekly growth and SEb would equal 1.40 WRCM per week and .70 WRCM per week, respectively.

The alternate approach is to estimate growth and apply published estimates of SEb. There are some generic estimates of SEb available in the research literature (Christ, 2006c). It seems that SEb is primarily influenced by the duration of progress monitoring and the standard error of the estimate (SEE). The SEE is the standard deviation of errors around the line of best fit for a particular set of progress-monitoring data. The line of best fit is defined as the straight/linear line that describes progress monitoring data. The line is essentially a continuous stream of point predictions, or likely estimates of true score values across time.

Dr. Christ: We will talk about SEE in the training, but it is just the variability around the line of best fit.

It was earlier established that observed scores are simply estimates of true score values. If multiple measurements were collected on each occasion then there would be some dispersion of observed score values. The mean, or central estimate, of those observed scores provides the best estimate of the true score. However, there are relatively few measurements collected at each point in time throughout progress monitoring duration. Therefore, the best estimate of true score values across time is provided by the straight/linear line that minimizes the discrepancy between predicted and observed values. The standard deviation of those discrepancies is the SEE. It is striking to note that the magnitudes of SEM and SEE converge. That is, research has established that SEM is likely to approximate 6–12 WRCM and SEE is likely to approximate 8–14 WRCM. Published estimates do not correspond exactly, but they are substantially similar. That is, the dispersion around observed and true score estimates corresponds across estimates of both level and trend. That observation lends credence to both sets of estimates and the recommended applications of standard error herein.

It should be noted that estimates of SEb are smaller when progress monitoring durations are longer and when more data are available for evaluation. For example, Christ (2006b) observed that the median SEb after 2 weeks of progress monitoring was likely to approximate 7.96 WRCM (range = 1.59–14.33) and after 5 weeks it was reduced to 2.10 WRCM (range = .42–3.78). The median was further reduced to .41 after 15 weeks (range = .08–.75). The implication is that more data and longer durations improve estimates of growth and improve confidence in the corresponding estimates.
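The slope, SEE, and SEb described above can also be computed directly rather than in a spreadsheet. The following is a minimal sketch using the standard ordinary least squares formulas (SEE as the square root of the residual sum of squares divided by n − 2, and SEb as SEE divided by the square root of the sum of squared deviations of the days). The function name `slope_and_standard_error` and the progress monitoring data are hypothetical.

```python
import math


def slope_and_standard_error(days, scores):
    """Return (daily slope, SEb, SEE) from an ordinary least squares fit."""
    n = len(days)
    if n != len(scores) or n < 3:
        raise ValueError("need at least 3 paired observations")
    mean_x = sum(days) / n
    mean_y = sum(scores) / n
    sxx = sum((x - mean_x) ** 2 for x in days)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(days, scores))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    # Residuals are the discrepancies between observed and predicted scores.
    residuals = [y - (intercept + slope * x) for x, y in zip(days, scores)]
    see = math.sqrt(sum(r ** 2 for r in residuals) / (n - 2))
    se_slope = see / math.sqrt(sxx)
    return slope, se_slope, see


# Hypothetical data: one CBM-R probe per week for six weeks (day number, WRCM).
days = [0, 7, 14, 21, 28, 35]
scores = [20, 23, 21, 26, 27, 30]

daily_slope, daily_se, see = slope_and_standard_error(days, scores)
weekly_slope = 7 * daily_slope  # convert daily growth to weekly growth
weekly_se = 7 * daily_se        # convert daily SEb to weekly SEb

print(f"Weekly growth: {weekly_slope:.2f} WRCM, SEb: {weekly_se:.2f}, SEE: {see:.2f}")
```

Because the days are entered as day numbers, the slope and SEb come out per day and are multiplied by 7, matching the weekly conversion described in the text.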
Christ also posited that well-controlled measurement conditions and well-developed instrumentation are likely to substantially reduce the magnitude of SEb.

Confidence Interval

Previous research provides evidence to support the validity and reliability of CBM measures (Good & Jefferson, 1998; Marston, 1989). However, it is difficult to translate the meaning of psychometric reliability to the interpretive process. That is, reliability coefficients can be reviewed to determine whether measurement procedures and instruments should be used to guide low-stakes screening-type decisions (r > .70) or high-stakes diagnostic/eligibility decisions (r > .90) (Kelly, 1927; Sattler, 2001). While checking information about the technical properties of a measure to make sure it has adequate reliability for a particular use is important, school psychologists should not interpret reliability estimates to establish that assessment outcomes themselves are either reliable or unreliable.

Dr. Christ: This next statement is the BIG IDEA of this article.

The reliability and precision of test scores are distributed across a continuum. No test is either reliable or unreliable. The most efficient way to translate the concept of reliability for interpretation is to calculate and use the SEM or SEb to construct a confidence interval. This allows the consumer of the data to see how much variability is present and to determine how that variability has an impact on the decision-making process.

As previously discussed, estimates of SEM can be derived from either local normative data or from published estimates. Estimates of SEb can be gleaned from the research literature or be calculated directly from student-specific datasets. In either case, the confidence interval is a multiple of the standard error. A 68%, 90%, or 95% confidence interval is constructed by multiplying the SEM by 1.00, 1.645, or 1.96, respectively. Therefore, assuming a SEM of 6, the confidence interval (68%) is equal to x +/- 6; the confidence interval (90%) is equal to x +/- 10; and the confidence interval (95%) is equal to x +/- 12. If the observed CBM-R performance were 85 WRCM, then there is a 68% chance that the individual’s true level of performance is within the range of 79–91 WRCM, a 90% chance that their true level of performance is within 75–95 WRCM, and a 95% chance that it is within 73–97 WRCM. These confidence intervals provide both a likely range of performance across repeated administrations and an interval to estimate the true level of typical performance (i.e., true score). Confidence intervals can also be calculated around estimates of growth using SEb in multiples of 1.00, 1.645, and 1.96 for 68%, 90%, and 95% confidence intervals, respectively.

Application and Use

CBM-R frequently demonstrates test–retest reliability above .90. However, it is unclear how such estimates should be applied during interpretation when CBM-R data are used to guide educational decisions. For example, suppose the following set of hypothetical facts: (a) you are part of a problem-solving team that must determine which students should receive early intervention services, (b) the cutoff for early intervention services is established at the 15th percentile, and (c) in the fall of second grade the 15th percentile of CBM-R performance is 20 WRCM. It is difficult to determine the appropriate level of confidence that should be placed in scores falling in the range of 15–25 WRCM. How much confidence should the problem-solving team invest in those CBM-R outcomes? Are additional assessments necessary? As previously discussed, these CBM-R outcomes are estimates of typical performance that would likely change if students were assessed on an alternate day or with an alternate passage.
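One way to approach the cutoff question is to build a confidence interval around each observed score and check whether the interval contains the cutoff. The sketch below is illustrative only: it uses the conventional two-sided standard normal multipliers (1.00, 1.645, and 1.96), a hypothetical SEM of 6 WRCM, and invented function names.

```python
# Conventional two-sided standard-normal multipliers for each confidence level (%).
Z_MULTIPLIERS = {68: 1.00, 90: 1.645, 95: 1.96}


def confidence_interval(observed, standard_error, level=68):
    """Return (low, high) bounds: observed score +/- multiplier * standard error."""
    half_width = Z_MULTIPLIERS[level] * standard_error
    return observed - half_width, observed + half_width


def interval_includes_cutoff(observed, sem, cutoff, level=68):
    """True when the cutoff lies inside the interval, so the decision is uncertain."""
    low, high = confidence_interval(observed, sem, level)
    return low <= cutoff <= high


SEM = 6      # hypothetical SEM in WRCM
CUTOFF = 20  # 15th percentile in the hypothetical scenario

for score in (15, 20, 25, 30):
    low, high = confidence_interval(score, SEM, level=90)
    uncertain = interval_includes_cutoff(score, SEM, CUTOFF, level=90)
    print(f"{score} WRCM: 90% CI {low:.1f} to {high:.1f}, includes cutoff: {uncertain}")
```

Scores whose interval includes the cutoff are the ones for which additional assessment is most likely to change the decision.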
A best practices approach to CBM-R interpretation requires that the problem-solving team consider the likely magnitude of variation across actual or theoretical administrations. Actual variation could be observed by administering multiple CBM-R probes on multiple occasions. In that case, the observed range across a moderate number of administrations could be reported to estimate the magnitude of variability in performance. Those data might be reported with a statement such as: When administered three second-grade CBM-R probes on each of 3 days, Jason performed an average of 20 WRCM with a range between 13 and 27 WRCM. That statement communicates both an estimate of central tendency and the variability in student performance across multiple assessments.
Similarly, to communicate the student’s rate of growth over time, the observed slope (calculated using software such as Excel) can be reported with a statement such as: When monitored weekly across 15 weeks using second-grade CBM-R probes, Jason gained an average of two words per week. However, the observed slope should also be recognized as an estimate of the true slope, and this imprecision should be communicated as well. Using SEb values calculated from the observed data or published estimates of SEb corresponding to the number of weeks of data collection, measurement conditions, and SEE (Table 2), an appropriate interpretation might be: When monitored weekly across 15 weeks using second-grade CBM-R probes, Jason gained between 1.59 and 2.41 words per week (i.e., 2.00 +/- 0.41, the SEb that Table 2 lists for 15 weeks and an SEE of 10). This information could also be communicated using a confidence interval around estimates of growth using SEb in multiples of 1.00, 1.68, and 1.96 for 68%, 90%, and 95% confidence intervals, respectively.
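For teams that want to calculate SEb directly from a student-specific dataset, the following Python sketch fits an ordinary least squares line and derives the SEE and the standard error of the slope. It is a minimal illustration, not the authors' procedure: the function names and the weekly scores are hypothetical, and the SEb formula used is the standard regression one, SEE divided by the square root of the summed squared deviations of the week values (equivalent to SEE divided by the population standard deviation of the weeks times the square root of n).

```python
import math


def slope_with_standard_error(weeks, scores):
    """Fit a least squares line; return (slope, SEE, SEb)."""
    n = len(weeks)
    mean_x = sum(weeks) / n
    mean_y = sum(scores) / n
    # Sum of squared deviations of x, and of cross products of x and y.
    sxx = sum((x - mean_x) ** 2 for x in weeks)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(weeks, scores))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    # SEE: spread of observed scores around the line of best fit.
    residual_ss = sum(
        (y - (intercept + slope * x)) ** 2 for x, y in zip(weeks, scores)
    )
    see = math.sqrt(residual_ss / (n - 2))
    # SEb: standard error of the slope estimate.
    seb = see / math.sqrt(sxx)
    return slope, see, seb


def slope_interval(slope, seb, multiplier=1.00):
    """Return (lower, upper) as slope +/- multiplier * SEb."""
    return slope - multiplier * seb, slope + multiplier * seb


if __name__ == "__main__":
    # Hypothetical weekly CBM-R scores (WRCM) across 15 weeks.
    weeks = list(range(1, 16))
    scores = [22, 21, 27, 25, 31, 30, 36, 33, 39, 41, 40, 46, 45, 51, 49]

    slope, see, seb = slope_with_standard_error(weeks, scores)
    low, high = slope_interval(slope, seb, multiplier=1.00)
    print(f"Slope: {slope:.2f} WRCM per week (SEE {see:.2f}, SEb {seb:.2f})")
    print(f"68% interval for growth: {low:.2f} to {high:.2f} WRCM per week")
```

Calling `slope_interval(2.0, 0.41)` reproduces the 1.59 to 2.41 range reported for Jason, and passing 1.68 or 1.96 as the multiplier widens the interval to the 90% or 95% level described in the text.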
When data from multiple administrations are not available to observe the range of performance across assessment occasions, school psychologists can rely on estimates of SEM, which provide the range of likely performance across multiple theoretical administrations. Those data might be reported with a statement such as: When administered three second-grade CBM-R probes on a single day, Jason’s typical level of performance was estimated to be 20 +/- 6 WRCM. That statement communicates both the median level of performance (i.e., 20 WRCM) and the SEM (i.e., 6 WRCM), which translates to a 68% confidence interval (i.e., 14–26 WRCM). Other more technical and precise language could also be used to communicate that there is a 68% chance that Jason’s true score falls within +/- 6 WRCM of his observed performance. Regardless of the language used, it is important to communicate that no individual observation or test score represents the stable and absolute level of an individual’s true score/performance. The procedures to estimate SEM and construct confidence intervals are provided above.
Summary
CBM comprises a set of measurement procedures that are uniquely suited to inform problem solving and RTI. The prominence, use, and emphasis on CBM are likely to increase in coming years due, in part, to the 2004 reauthorization of IDEA and the advent of RTI models (i.e., multitiered and dual discrepancy). While CBM has historically been used to guide low-stakes classroom and screening-type decisions, it is likely that CBM will be used to guide high-stakes diagnosis and eligibility-type decisions in the future. As this higher stakes use of CBM data comes into daily practice, school psychologists must remain ever vigilant in promoting best practices in test score interpretation. In part, that requires some reference to the consistency, reliability, and sensitivity of measurement outcomes.
School psychologists should reference and report confidence intervals to support the valid use and interpretation of measurement outcomes. Failing to present data in such a manner would likely result in misinterpretation of results and lead to inappropriate decisions whose impact becomes harder to correct as the stakes rise. School psychologists should consider the likely magnitude of dispersion and variability when the typical level of CBM performance is reported. Three potential solutions were offered here: (a) repeatedly assess students across days with multiple probes and report performance in a graphic format that supports visual analysis of level and variability; (b) repeatedly assess students and report the range of performance across days and probes in a numeric format, which might include an average score and standard deviation; and (c) administer fewer assessments and report the SEM. A similar set of options is available when growth, trend, or slope is estimated. More measurement and a more robust dataset are often preferred. However, resources are limited, so a balance must be struck among the available resources, how those resources are allocated, and the frequency of measurement. Reference to standard error and confidence intervals might help guide decisions regarding the frequency of measurement and issues of interpretation.
In the end, CBM is only one source and method of data collection. A multisource and multimethod approach is necessary to guide high-stakes decisions. The convergence of evidence across sources and methods is the critical factor. That does not, however, relieve the burden to promote the most valid interpretations and applications of measurement outcomes, including those collected with CBM procedures.
Dr. Christ: Now, wasn't that easy? We will discuss all this in the training.
Table 2. Standard Error of the Slope Estimate by Progress Monitoring Duration in Weeks: CBM-R
Weeks of progress monitoring (a, b), by measurement conditions and SEE (c)
            Optimal (d)             Moderate (d)            Poor (d)
Weeks    SEE=2  SEE=4  SEE=6    SEE=8  SEE=10 SEE=12   SEE=14 SEE=16 SEE=18
2        1.59   3.18   4.78     6.37   7.96   9.55     11.14  12.74  14.33
3         .88   1.77   2.65     3.53   4.41   5.30      6.18   7.06   7.95
4         .58   1.16   1.74     2.32   2.91   3.49      4.07   4.65   5.23
5         .42    .84   1.26     1.68   2.10   2.52      2.94   3.36   3.78
6         .32    .64    .96     1.28   1.61   1.93      2.25   2.57   2.89
7         .26    .51    .77     1.02   1.28   1.54      1.79   2.05   2.30
8         .21    .42    .63      .84   1.05   1.26      1.47   1.68   1.89
9         .18    .35    .53      .71    .88   1.06      1.24   1.41   1.59
10        .15    .30    .45      .61    .76    .91      1.06   1.21   1.36
11        .13    .26    .39      .53    .66    .79       .92   1.05   1.18
12        .12    .23    .35      .46    .58    .69       .81    .92   1.04
13        .10    .21    .31      .41    .51    .62       .72    .82    .92
14        .09    .18    .28      .37    .46    .55       .64    .74    .83
15        .08    .17    .25      .33    .41    .50       .58    .66    .75
Note. Standard error of the slope reported in words read correct per minute per week. (a) Estimates based on the assumption that two data points are collected weekly. (b) Reported for weekly standard errors of the slope (SEb = SEE / [SD_days × √n]). (c) SEE is the average standard deviation in words read correctly per minute from predicted CBM-R performances along the line of best fit. (d) Qualitative descriptor for measurement conditions based on the authors’ subjective evaluation.
Dr. Christ: Don't worry about this table. It is more technical than you need.
References
American Educational Research Association, American Psychological Association, & National Council on Measurement in Education. (1999). Standards for educational and psychological testing. Washington, DC: American Educational Research Association. Ardoin, S. P., & Christ, T. J. (in press). Evaluating curriculum-based measurement slope estimate using data from triannual universal screenings. School Psychology Review. Barlow, D. H., & Hersen, M. (1984). Single-case experimental designs: Strategies for studying behavior change (2nd ed.).
New York: Pergamon Press. Burns, M., & Coolong-Chaffin, M. (2006). Response-to-intervention: The role of and effect on school psychology. School Psychology Forum: Research in Practice, 1, 1–13. Christ, T. J. (2006a). Curriculum-based measurement math: Toward improving instrumentation and administration procedures. Paper presented at the annual meeting of the National Association of School Psychologists, Anaheim, CA. Christ, T. J. (2006b). Does CBM have error? Standard error and confidence intervals. Paper presented at the annual meeting of the National Association of School Psychologists, Anaheim, CA. Christ, T. J. (2006c). Short-term estimates of growth using curriculum-based measurement of oral reading fluency: Estimates of standard error of the slope to construct confidence intervals. School Psychology Review, 35, 128–133. Christ, T. J., Davie, J., & Berman, S. (2006). CBM data and decision making in RTI contexts: Addressing performance variability. Communiqué, 35, 29–31. Christ, T. J., Johnson-Gros, K., & Hintze, J. M. (2005). An examination of computational fluency: The reliability of curriculum-based outcomes within the context of educational decisions. Psychology in the Schools, 42, 615–622. Christ, T. J., & Schanding, T. (2007). Practice effects on curriculum based measures of computational skills: Influences on skill versus performance analysis. School Psychology Review, 36, 147–158. Christ, T. J., & Silberglitt, B. (2007). Curriculum-based measurement of oral reading fluency: The standard error of measurement. School Psychology Review, 36, 130–146. Christ, T. J., & Vining, O. (2006). Curriculum-based measurement procedures to develop multiple-skill mathematics computation probes: Evaluation of random and stratified stimulus-set arrangements. School Psychology Review, 35, 387–400. Colon, E. P., & Kranzler, J. H. (2006). Effect of instructions on curriculum-based measurement of reading. Journal of Psychoeducational Assessment, 24, 318–328. 
Crocker, L., & Algina, J. (1986). Introduction to classical and modern test theory. Orlando, FL: Harcourt Brace. Deno, S. L. (1985). Curriculum-based measurement: The emerging alternative. Exceptional Children, 52, 219–232. Deno, S. L. (2003). Developments in curriculum-based measurement. Journal of Special Education, 37, 184–192. Deno, S. L., Fuchs, L. S., Marston, D., & Shin, J. (2001). Using curriculum-based measurement to establish growth standards for students with learning disabilities. School Psychology Review, 30, 507–524. Derr-Minneci, T. F. (1990). A behavioral evaluation of curriculum-based assessment for reading: Tester, setting, and task demand effects on high- vs. average- vs. low-level readers. Dissertation Abstracts International, 51, 2669. Derr-Minneci, T. F., & Shapiro, E. S. (1992). Validating curriculum-based measurement in reading from a behavioral perspective. School Psychology Quarterly, 7, 2–16. Fuchs, D., Fuchs, L. S., McMaster, K. N., & Al Otaiba, S. (2003). Identifying children at risk for reading failure: Curriculum-based measurement and the dual-discrepancy approach. In H. L. Swanson, K. R. Harris, & S. Graham (Eds.), Handbook of learning disabilities (pp. 431–449). New York: Guilford Press. Fuchs, D., Mock, D., Morgan, P. L., & Young, C. L. (2003). Responsiveness-to-intervention: Definitions, evidence, and implications for the learning disabilities construct. Learning Disabilities Research & Practice, 18, 157–171. Fuchs, L. S., & Deno, S. L. (1991). Paradigmatic distinctions between instructionally relevant measurement models. Exceptional Children, 57, 488–500. Fuchs, L. S., & Deno, S. L. (1992). Effects of curriculum within curriculum-based measurement. Exceptional Children, 58, 232–242. Fuchs, L. S., Fuchs, D., Hamlett, C. L., Walz, L., & Germann, G. (1993). Formative evaluation of academic progress: How much growth can we expect.
School Psychology Review, 22, 27–48. Good, R. H., & Jefferson, G. (1998). Contemporary perspectives on curriculum-based measurement validity. In M. R. Shinn (Ed.), Advanced applications of curriculum-based measurement (pp. 61–88). New York: Guilford Press. Good, R. H., & Shinn, M. R. (1990). Forecasting accuracy of slope estimates for reading curriculum-based measurement: Empirical evidence. Behavioral Assessment, 12, 179–193. Hintze, J. M., & Christ, T. J. (2004). An examination of variability as a function of passage variance in CBM progress monitoring. School Psychology Review, 33, 204–217. Hintze, J. M., Christ, T. J., & Keller, L. A. (2002). The generalizability of CBM survey-level mathematics assessments: Just how many samples do we need? School Psychology Review, 31, 514–528. Hintze, J. M., Christ, T. J., & Methe, S. A. (2006). Curriculum-based assessment. Psychology in the Schools, 43, 45–56. Howe, K. B., & Shinn, M. M. (2002). Standard Reading Assessment Passages (RAPs) for use in general outcome measurement: A manual describing development and technical features. Retrieved April 11, 2007, from www.aimsweb.com Kelly, T. L. (1927). Interpretations of educational measures. Yonkers, NY: World Book. Marston, D. B. (1989). A curriculum-based measurement approach to assessing academic performance: What it is and why do it. In M. R. Shinn (Ed.), Curriculum-based measurement: Assessing special children (pp. 18–78). New York: Guilford Press. Sattler, J. M. (2001). Assessment of children: Cognitive applications (4th ed.). San Diego, CA: Author. Shinn, M. R. (2002). Best practices in using curriculum-based measurement in a problem-solving model. In A. Thomas & J. Grimes (Eds.), Best practices in school psychology IV (pp. 671–698). Bethesda, MD: National Association of School Psychologists. Shinn, M. R., Gleason, M. M., & Tindal, G. (1989). Varying the difficulty of testing materials: Implications for curriculum-based measurement. 
Journal of Special Education, 23, 223–233. Shinn, M. R., Good, R. H., & Stein, S. (1989). Summarizing trend in student achievement: A comparison of methods. School Psychology Review, 18, 356–370. Spearman, C. (1904). The proof and measurement of associations between two things. American Journal of Psychology, 15, 72–101. Spearman, C. (1907). Demonstration of formula for true measurement of correlation. American Journal of Psychology, 18, 161–169. Spearman, C. (1913). Correlations of sums and differences. British Journal of Psychology, 5, 417–426. Tawney, J. W., & Gast, D. L. (1984). Single subject research in special education. New York: Merrill.